The Confluent Core

AI contributes; human decides. The partnership is genuine but asymmetric. The human is the one accountable for what ships — that accountability is structural, not aspirational.

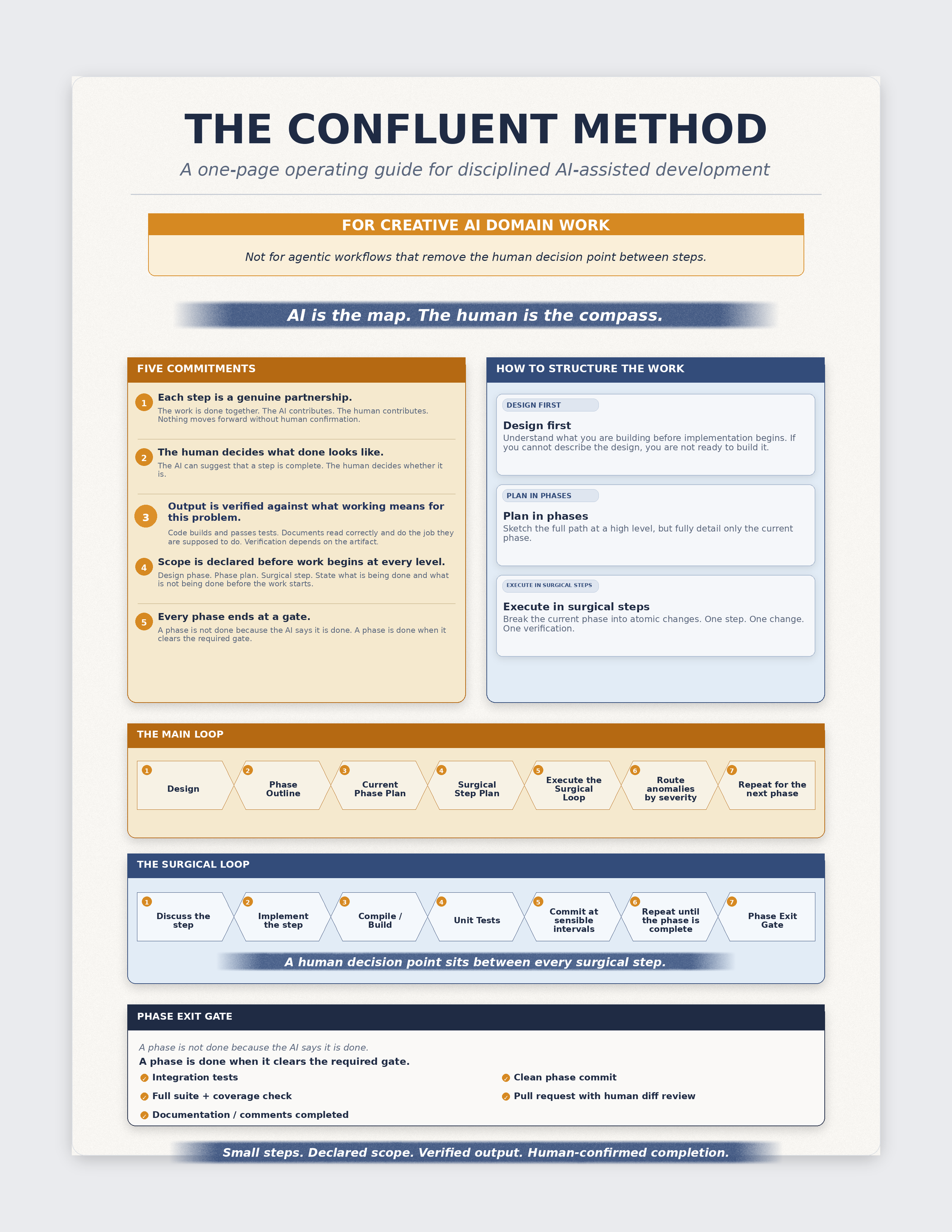

The Structured Process for AI-Assisted Development.

This guide provides the actionable methodology for disciplined AI-assisted development. Design first. Plan in phases. Execute in surgical steps. End every phase at a gate.

Every failure pattern in Human-Assisted AI has a structural cause: scope declared too loosely, verification treated as ceremonial, the human decision point made optional at the wrong moment. The Confluent Method is the answer to those structural causes — not a philosophy, but a working method.

Five commitments. A three-level structure of design, phase plan, and surgical step. A Main Loop that governs the phases and a Surgical Loop that governs every step. A Phase Exit Gate that defines what "done" means — not what the AI says is done, but what passes review, tests, coverage check, documentation, and a human diff.

This guide is for practitioners responsible for their team's AI-assisted work: people who already know AI-assisted development is fragile and want a concrete way to work, with defined steps, a defined gate, and a defined human role at every meaningful decision point.

It is also the companion read for any engineer who finished Human-Assisted AI and asked: once I can name the patterns, what do I actually do differently?

There is a third option: keep using AI for the creative work it is good at, but structure the work so the human confirmation point is load-bearing, not ceremonial. Most AI-assisted workflows implicitly treat the human as a reviewer of a fait accompli. The Confluent Method makes the human decision point structural — it cannot be skipped, rushed, or delegated to a linter.

"AI is the map. The human is the compass." (From The Confluent Method)

The difference between a team whose AI-assisted work ships dependably and one whose AI work keeps surprising them at review is not model choice. It is whether scope is declared at three levels before work begins, and whether "done" is defined somewhere other than the model's confidence.

Each step is a partnership. The human decides what done looks like. Output is verified against what working means for this problem. Scope is declared before work begins at every level. Every phase ends at a gate.

Three nested levels of scope. You cannot skip levels. Design first, phase in stages, execute in surgical steps. Scope declared at each level before the next level begins.

The Main Loop and the Surgical Loop have seven steps each. The Surgical Loop's step 5 is the human decision point: the moment where the engineer evaluates the output and decides whether to proceed. It is not optional.

A phase is not done because the AI says it is. A phase is done when it passes integration tests, coverage check, documentation review, clean phase commit, and human diff review. The gate is non-negotiable.
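As a rough illustration only, the five gate conditions listed above can be modelled as a checklist in which every item must pass. This is a minimal Python sketch and not part of the method itself: the class name `PhaseExitGate`, its field names, and its methods are hypothetical, chosen here to mirror the wording of the five conditions.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PhaseExitGate:
    """Hypothetical checklist mirroring the five exit conditions in the text."""
    integration_tests_pass: bool
    coverage_check_pass: bool
    documentation_reviewed: bool
    phase_commit_clean: bool
    human_diff_reviewed: bool

    def failures(self) -> list[str]:
        """Return the names of the conditions that have not passed."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_done(self) -> bool:
        """A phase counts as done only when every condition has passed."""
        return not self.failures()


# Example: everything is green except the human diff review.
gate = PhaseExitGate(
    integration_tests_pass=True,
    coverage_check_pass=True,
    documentation_reviewed=True,
    phase_commit_clean=True,
    human_diff_reviewed=False,  # only a human reviewer would set this to True
)
print(gate.is_done())   # False
print(gate.failures())  # ['human_diff_reviewed']
```

The sketch has no field for the AI's own claim of completion. In this model, "done" is derived only from the five checks, so a confident report from the model cannot open the gate.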

The reference card is a single page that captures the operating model: the Five Commitments, the three-level structure, and the two loops. It is not a summary but a working reference, built to sit above the workstation and be consulted mid-session, not just during onboarding.

Print this. Pin it above the workstation. The card is the method compressed to a single reference surface — every element of it corresponds to a chapter in the book, but none of it requires the book to be useful mid-session.

The method is built for Creative AI Domain work — where the AI produces an artifact and a human evaluates it for quality, judgment, or correctness. It does not extend cleanly to agentic workflows that remove the human decision point between steps. The Halocline is the companion work that names the boundary and explains why. If you are working in a context where autonomous pipelines execute sequences of AI decisions without a human at each gate, read The Halocline before applying this method.

Teams stop arguing about "what counts as done" because the Phase Exit Gate defines it. Review discipline stops relaxing after a run of good output because the human decision point is structural, not optional. Scope drift stops happening at the edges because scope is declared at three levels before work begins.

The method does not add ceremony for its own sake. Every element has a structural reason — the loop catches the patterns named in Human-Assisted AI, the gate prevents them from shipping, and the three levels keep scope contained at every level of work.

The recognition layer — eight failure modes, named and described.

The engineering philosophy underneath the method.

The boundary between CAID and OAID — and why it matters for this method.

Russo, P. (2026). The Confluent Method: The Structured Process for AI-Assisted Development. Riverbend Consulting Group. https://doi.org/10.5281/zenodo.19597599