Two domains, not one

CAID: AI produces artifacts, humans evaluate. OAID: AI executes defined operations toward known outcomes. Same technology; different discipline requirements; not interchangeable.

The Invisible Boundary Between Two Kinds of AI Work

There are two fundamentally different kinds of AI work, and the industry treats them as one. This guide introduces the boundary between the Creative AI Domain and the Operational AI Domain and makes that boundary actionable.

Most practitioners doing AI-assisted work eventually run into the same question: what about agentic AI? What happens when the pipeline removes the human from between the steps? The field has been treating that question as an edge case. It is not an edge case. It is a different kind of work, and it requires a different discipline.

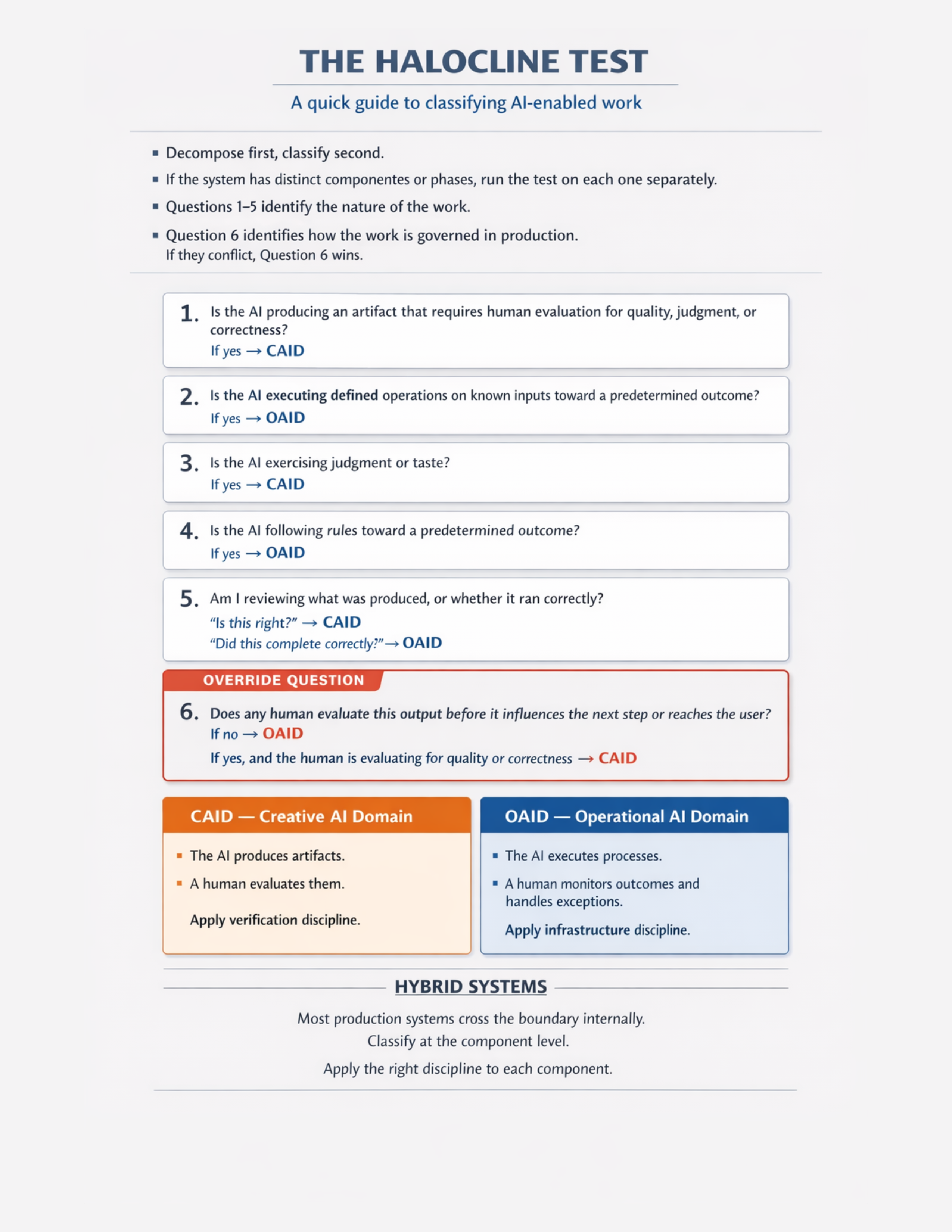

The Halocline names the boundary between the Creative AI Domain (CAID) and the Operational AI Domain (OAID). It gives practitioners a practical classification tool — the Halocline Test — so teams can identify which kind of AI work a given component involves and apply the right discipline to it.

This book is for architects, directors, engineering leaders, and practitioners already doing AI-assisted work who keep running into the same question: what about agentic AI? It is the answer to that question. It is not a general introduction to autonomous systems; it is a classification tool for engineers who already understand the CAID space and need a way to reason about what happens when the human is removed from the loop.

Most production systems are hybrids. Some parts are creative and need human judgment. Other parts are operational and need infrastructure rigor. Teams that treat these as one thing apply the wrong discipline everywhere — heavy-handed verification on routine execution, loose oversight on creative judgment. The Halocline names the boundary so discipline can be applied correctly on each side.

"The override question is the one that matters most in practice." (From The Halocline)

A single misclassified component can make an entire methodology look broken. A pipeline that should be operational gets verification-reviewed into friction. A creative judgment call that should have a human at the gate gets automated into an infrastructure pipe. The classification is not philosophical — it is the difference between applying a methodology correctly and applying it expensively to the wrong thing.


Six questions. Five identify the nature of the work. The sixth is the override: does any human evaluate this output before it influences the next step? If no, OAID is in effect regardless of the other answers.

Most production systems cross the boundary internally. The classification is per-component, not per-product. A system can have CAID components and OAID components running side by side.

CAID applies verification discipline. OAID applies infrastructure discipline. They are not substitutes for each other. Applying CAID discipline to an OAID component does not make it safer — it makes it slower and still wrong.

The Confluent Method's guarantees depend on the human decision point between steps. Agentic pipelines remove that decision point. The Halocline explains why that matters, and opens OAID as frontier territory with its own discipline needs.

Six questions that classify any AI-enabled component. Five identify the nature of the work. The sixth is the override that resolves every ambiguous case. Print it, keep it in the design review, and apply it per-component — not per-product.

The override question is the one that matters most in practice. Every other question clarifies the nature of the work. The override is the one that binds — if no human evaluates the output before it influences the next step, the component is OAID regardless of how it was designed or labeled.
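The override rule can be sketched in a few lines of Python. This is a minimal illustration, not the Halocline Test itself: the five nature-of-work questions are not reproduced here, so their combined result is passed in as an opaque `provisional` value, and the names `HaloclineAnswers` and `classify` are hypothetical. The sketch also assumes that when a human does evaluate the output, the other five answers decide the domain.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    CAID = "Creative AI Domain"
    OAID = "Operational AI Domain"


@dataclass
class HaloclineAnswers:
    # Assumption: the domain suggested by the five nature-of-work
    # questions, which this sketch treats as an opaque input.
    provisional: Domain
    # Question six (the override): does any human evaluate this output
    # before it influences the next step?
    human_evaluates_before_next_step: bool


def classify(answers: HaloclineAnswers) -> Domain:
    # The override binds: with no human evaluation, the component is
    # OAID regardless of what the other answers suggested.
    if not answers.human_evaluates_before_next_step:
        return Domain.OAID
    return answers.provisional
```

A component that was designed and labeled as creative still classifies as OAID once the human check is gone: `classify(HaloclineAnswers(Domain.CAID, False))` returns `Domain.OAID`.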

The vocabulary, discipline requirements, human role, and failure exposure are different on each side. Teams that hold both in view simultaneously can assign the right tooling and the right oversight to each component — rather than applying one methodology expensively across both.

Most production systems are hybrids. The classification is per-component. Apply the test to each component, not to the product as a whole, and apply the corresponding discipline to each side.
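Per-component tagging might look something like the following sketch. The component names and the `tag_components` helper are hypothetical, invented for illustration; the only thing taken from the text is the pairing of CAID with verification discipline and OAID with infrastructure discipline.

```python
from enum import Enum


class Domain(Enum):
    CAID = "CAID"
    OAID = "OAID"


# Pairing of domain to discipline as the text describes it.
DISCIPLINE = {
    Domain.CAID: "verification discipline",
    Domain.OAID: "infrastructure discipline",
}


def tag_components(components: dict[str, Domain]) -> dict[str, str]:
    """Map each component name to the discipline its domain calls for."""
    return {name: DISCIPLINE[domain] for name, domain in components.items()}


# Hypothetical hybrid product: one creative component and one
# operational component running side by side.
product = {
    "draft_release_notes": Domain.CAID,
    "route_support_ticket": Domain.OAID,
}
plan = tag_components(product)
```

The point of the design is that the product as a whole never receives a single label; each entry in `plan` carries its own discipline.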

With the boundary named, teams stop treating agentic AI as an extension of the same methodology. Architects get a tool to tag each component and apply discipline accordingly. The "what about agents?" question gets a clean answer: they belong on the OAID side of the boundary, and OAID has its own discipline needs, many of which the field is still working out.

That frontier status is honest, not a failure. The Halocline does not pretend to define OAID discipline completely. It names the boundary clearly, opens the territory, and points practitioners toward the right questions — which is the prerequisite for the field producing the right answers.

The recognition layer — where the Failure Mode Catalog lives.

The operating method that applies on the CAID side of the boundary.

The engineering philosophy that makes AI mistakes isolable on either side.


Russo, P. (2026). The Halocline: The Invisible Boundary Between Two Kinds of AI Work. Riverbend Consulting Group. https://doi.org/10.5281/zenodo.19617437